2021 Retrospective

As the 671st day of 2020 is finally drawing to a close, we find ourselves at the turn of yet another page, which promises nothing but misery and sorrow. Crows feeding on thousands of corpses hanging upside down. Oh wait a second, this is the introduction of a rather different post of mine, just unread that please, it’s too late to delete it now.

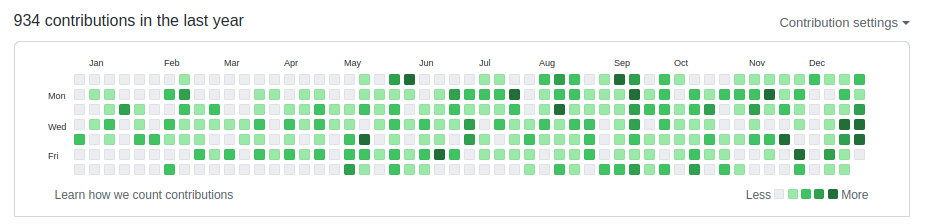

On a more serious note, let us take a gander at the traditional year-in-review commit chart, graciously generated by none other than the mighty GitHub, and let the data speak for itself.

The avid readers of this blog, or rather the more observant ones, will immediately notice how the number of contributions is significantly lower compared to previous years. And what is the reason for that? Well, the explanation is rather simple I’m afraid: I managed to put a lot fewer hours into my various side projects.

Those of you who happen to know me personally in some capacity will surely also know that I always have a number of side projects that I am actively working on at any given time. It is almost like an endless queue of ideas that are yet to be realized and unleashed into the world. That reminds me that I really have to clean up my repositories (144!) on GitHub, because things are getting out of hand.

OLEN Games

While I got a lot less done this past year, I did manage to complete a couple of research cycles on two of my most important projects, which you have heard me babble about over the years in various forms and incarnations.

What am I speaking of? The portable 2D/3D game engine and framework, and more recently the self-contained portable build automation tool designed for projects primarily written in C/C++.

These two bad boys are at the top of my totem pole, and in 2021 I finally managed to figure out the direction, style and general scope of them. My great hope for 2022 is to make some serious headway on both, instead of my traditional style of it’s done, when it’s done.

This is where OLEN Games is going to come into play as an umbrella of sorts, which will let me separate these two (and more!) bad boys from the rest of my experimental work and put them into more of a front-and-center position so to speak.

Having an umbrella like this has the added benefit of simplifying the process of naming things. How do people even name their children? In my case, I will just roll with OLEN Engine and OLEN Build. Tada! Naming crisis graciously averted.

I always tend to blabber on and on about technology, but I am building most of it in order to finally be able to build a proper game or two in the near future. Building an engine without building any games in-house with it is a very, very dangerous and ill-advised thing to do, and I strongly advise anyone against going down that path of treachery, it will not lead anywhere good!

A long overdue face-lift

After many moons of rolling with the same super basic design of this blog, I finally decided that it was about time to give it a well deserved face-lift as well as migrate it back to Jekyll.

A number of years ago I was looking for an excuse to write something non-trivial in Python and the result of that was the static site generator, that I eventually ended up calling doxter.

Once doxter was ready for prime-time I migrated this blog from Jekyll to it. And now the natural question is why migrate away from it and back to Jekyll again? Since that time Jekyll and GitHub made a wide array of changes, and GitHub Pages got even better, therefore I felt like going native again would be a good idea.

It remains to be seen if/when I’ll regret this decision in the months to come.

doxter is still out there and perfectly usable. It withstood the test of time, even going from Python 2.x to 3.x without any backbreaking changes or fuss, which isn’t something totally unexpected, considering the fact that I am the second best programmer that has ever lived. I’ll let you discover who is the very first one, but I am sure it has to do with a temple and an operating system of some description or another. What a weird combination, right? Totally!

In other, very much related news, I decided to keep the RSS feed alive, despite the fact that many consider RSS to be long dead at this point in time. Of course, if you happen to be reading this in your favorite RSS reader, then the so called facelift that I’ve been babbling about above will make absolutely no sense to you, and perhaps that is how things should be in life, wouldn’t you agree?

Also, I took the time and made the blog more mobile friendly, so it is now enjoyable on your extremely precious mobile phone or tablet, even though it’s highly unlikely that one would be consuming anything I write on this blog that way.

And now without any further ado, let us take a deep dive into the actual projects and experiments that I have hacked on during the course of this rather ghastly past year.

WoWiconify

WoWiconify was the result of a super duper impromptu coding session, without too much thought put into it, but the results turned out to be fairly decent and interesting.

Let me loop you in and ease you into what it actually is, starting the conversation off with a couple of images, which I am told are supposed to be worth not less and not a whole lot more than exactly a thousand words.

Considering this was told to me before NFTs were a thing, I consider it one of the few core truths of our existence.

Input

![]()

Output

![]()

What is going on? Well, it is a small command line tool that takes an input image and a number (up to a maximum of 512, which is a totally arbitrary limit) of spell icons from WoW (World of Warcraft), and produces an output image that uses those said spell icons to approximate the original input image.

It’s only a handful of lines of C and uses a couple of single-file libraries by Sean Barrett with no other dependencies.
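For the curious, the general idea behind this kind of image mosaic fits in a few lines. To be clear, this is not the actual WoWiconify code (that one is C); it is a minimal Python sketch, assuming each icon is reduced to its average color and each cell of the input is matched to the icon with the nearest average in RGB space. The function names and the "image as a list of rows of (r, g, b) tuples" representation are made up for illustration.

```python
# Hypothetical sketch: an image is a list of rows, a pixel is an (r, g, b) tuple.

def average_color(pixels):
    """Mean (r, g, b) of a flat list of pixels."""
    n = len(pixels)
    return tuple(sum(p[c] for p in pixels) / n for c in range(3))

def nearest_icon(color, icon_colors):
    """Index of the icon whose average color is closest (squared RGB distance)."""
    def dist(i):
        return sum((color[c] - icon_colors[i][c]) ** 2 for c in range(3))
    return min(range(len(icon_colors)), key=dist)

def iconify(image, cell, icon_colors):
    """Split the image into cell x cell blocks and pick an icon index per block."""
    height, width = len(image), len(image[0])
    result = []
    for y in range(0, height, cell):
        row = []
        for x in range(0, width, cell):
            block = [image[j][i]
                     for j in range(y, min(y + cell, height))
                     for i in range(x, min(x + cell, width))]
            row.append(nearest_icon(average_color(block), icon_colors))
        result.append(row)
    return result
```

The output is a grid of icon indices; drawing the chosen icons into the output image is the boring part and left out here. Squared RGB distance is the crudest possible metric, and a perceptual color space would likely give nicer results.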

Considering that this is merely a toy project, I do not provide any pre-compiled binaries, but if you want to check out the source code and perhaps even compile it yourself, then feel free to click right here.

Legend of Grimrock 2: Multiplayer uMod

That is a mouthful isn’t it?

Last year in December the so called uMod support landed in a special nutcracker beta branch of Legend of Grimrock 2 on Steam, in the form of a Lua source code drop and the ability to execute Lua in an unsandboxed fashion. This gives mods more or less unrestricted access to the main Lua state and, combined with the source code drop, can be used to monkey-patch almost anything within the game.

This also allows one to create a completely new game in an entirely different genre. In other words, the possibilities are truly endless in terms of what one could do with it, so long as all changes/modifications still abide by the modding and asset usage terms.

I knew about this and I took a quick glance at it right when it was dropped by Petri Häkkinen from Almost Human, but then as the new year came about it completely fell off my radar and I forgot about it.

Since I take a sabbatical every December, by taking the entire month off, I decided to take another look at it and see if I could leverage it to add some sort of drop-in co-op multiplayer support, which would allow two players to play both the main official campaign of the game as well as the myriad of user created custom dungeons. Obviously this doesn’t exclude the possibility of creating more co-op multiplayer focused dungeons either, it’s just that it must work with existing content.

After a few days of rather intense experimentation I came up with what can be seen in the video below.

I am not going to go into any of the technical details, nor release any of the underlying code just yet. Why? For two very good and perfectly valid reasons, which I am going to unpack below.

First of all, I don’t want to get ahead of myself until I am absolutely certain that I can pull this off in the manner that I just described above.

And second, the nutcracker beta branch of the game that enables uMod support is still kept hush-hush and low profile by the developers for the time being, and I don’t want to unnecessarily rock the proverbial boat just yet so to speak.

Besides, the entire journey will warrant a proper brain-dump type of a technical post, where I intend to take a deep dive into how it was all done, considering that there’s a treasure trove of things to talk about.

Fit for Autopsy

One of my favorite pastime activities is taking an existing code-base and attempting to port it to the web via the means of WASM by leveraging Emscripten.

This year’s victim happened to be Fit for Autopsy, which is a demopack or demo anthology by Fit.

It seemed like a natural fit, pun intended, and was already portable and compile-able on a number of platforms.

Now it did have a few remnants of the good old fashioned OpenGL fixed function pipeline in some of the renderer, which I hoped I could just keep as-is and let the fixed function pipeline emulation present in Emscripten handle.

Well, it kind of worked, but it definitely doesn’t render everything correctly, which means that I’ll have to rewrite some of the renderer, which is not too bad.

You can watch a very much work-in-progress-wasm-port version of the demo called Boy from the demopack over here.

Another WASM/Emscripten related project that I hacked on was a small tile-map editor written in C and using only the 2D <canvas> context to draw everything without utilizing WebGL directly.

Before you ask, for this particular experiment I ended up borrowing the assets from Catacomb Snatch.

I would also really like to port something of a higher or rather bigger caliber like Penumbra Overture or Shadowgrounds, but I have not managed to work up the courage just yet, considering that both of those would require a complete rewrite of their renderer and more before they could be even considered for porting anywhere.

js13kGames

The yearly [js13kGames][js13k] competition is part and parcel of my yearly collection of well defined rituals. This year hasn’t been any different, and I attempted to come up with something that is not totally uninteresting, yet can fit into a paltry 13 kilobytes after being compressed.

Unlike other years, I did manage to come up with a fairly decent idea in my honest and humble opinion, but like always didn’t manage to push it over the finish line, due to lack of time, etc. One day!

The idea itself, while not anything super revolutionary, is a tiny procedurally generated space gardening game, where the player has to tend to the plants on the planet and collect enough raw material to open the teleporter, which takes them to the next randomly generated planet. All this while fending off a flying enemy that also likes the raw materials and desperately wants to collect them; whenever it encounters the player, it will attempt to steal their raw materials as well as water, which is a scarce resource.

Perhaps I’ll come back to this idea and turn it into reality one day, provided that nobody beats me to it first, that is!

QOI

A couple of weeks ago, Dominic Szablewski announced a completely new image file format called QOI, which needless to say has gotten quite a lot of attention as well as traction among like minded peoples of the internet.

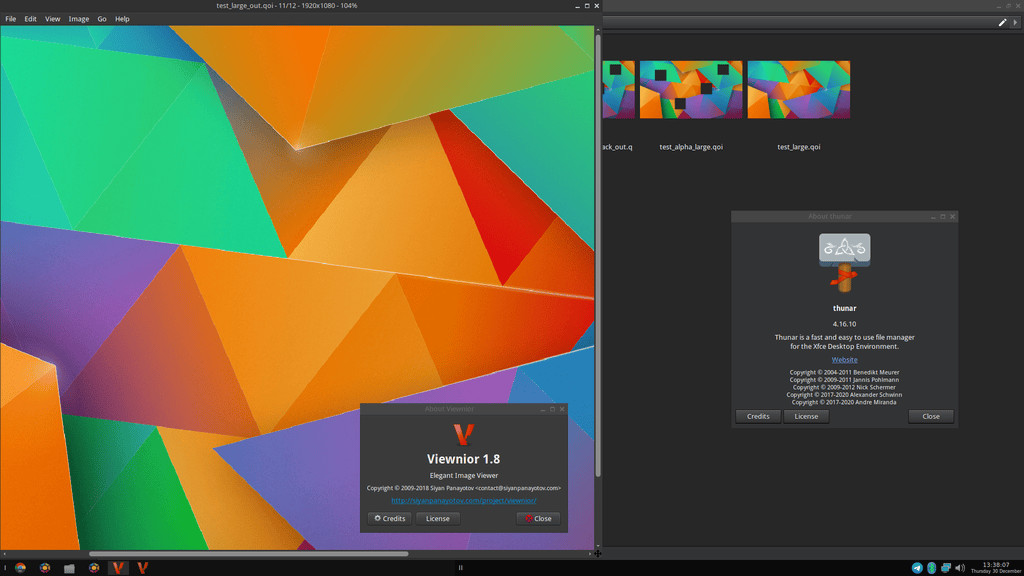

I didn’t want to miss such a historic moment; the birth of a completely new image file format is quite a big event. So, as any good law abiding citizen would, I decided to make my contribution in the form of a so called gdk-pixbuf loader, the source code of which can be found over here.

This allows existing GTK applications to load/save and generate thumbnails for images in the QOI image format, which turns the format into more of a first class citizen in the world of applications built with GTK, which is a significant number of applications in the Linux ecosystem.

As this was thrown together in a mere three days it definitely needs some rigorous testing, and there might be some bugs lurking in the shadows due to perhaps not handling some corner cases, but all in all it’s usable and gets the job done.

Building loaders is a totally under-documented and obscure process, and it made me realize how arcane and in many ways broken this entire system is. It’s to the point of requiring its very own separate and dedicated post in order to fully document and chronicle my experience, but that’s a story for another time.
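To give a taste of how small the format is: before any decoding happens, a loader has to recognize the file, and in QOI that means reading a 14 byte header made of the magic bytes `qoif`, a big-endian 32-bit width and height, a channel count (3 or 4) and a colorspace byte (0 or 1). The following is a minimal Python sketch of just that step, not a piece of my loader (which is C against gdk-pixbuf); the function and constant names are made up for illustration.

```python
import struct

QOI_MAGIC = b"qoif"
QOI_HEADER_SIZE = 14  # 4 magic + 4 width + 4 height + 1 channels + 1 colorspace

def parse_qoi_header(data):
    """Return (width, height, channels, colorspace) or raise ValueError."""
    if len(data) < QOI_HEADER_SIZE:
        raise ValueError("too short to be a QOI file")
    # ">" selects big-endian with no padding between fields.
    magic, width, height, channels, colorspace = struct.unpack(
        ">4sIIBB", data[:QOI_HEADER_SIZE])
    if magic != QOI_MAGIC:
        raise ValueError("missing 'qoif' magic")
    if channels not in (3, 4) or colorspace not in (0, 1):
        raise ValueError("invalid channels or colorspace")
    return width, height, channels, colorspace
```

A thumbnailer would stop roughly here to learn the dimensions up front, while a full loader goes on to decode the chunk stream that follows the header.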

Gemini on the Go

I mentioned Go during my 2020 retrospective and expressed my desire to write something non-trivial using it in order to be able to evaluate its merits, weaknesses and most importantly where and how it fits into my tool-belt.

Two months ago or so, I stumbled upon the Gemini internet protocol.

Gemini is a new internet protocol which:

- Is heavier than gopher

- Is lighter than the web

- Will not replace either

- Strives for maximum power to weight ratio

- Takes user privacy very seriously

What shocked me the most is that I had never heard about it until that very moment in time.

Just like with QOI, it’s not an everyday event to witness the birth of a brand new internet protocol like this, so once again I wanted to leave my mark and make my contribution.

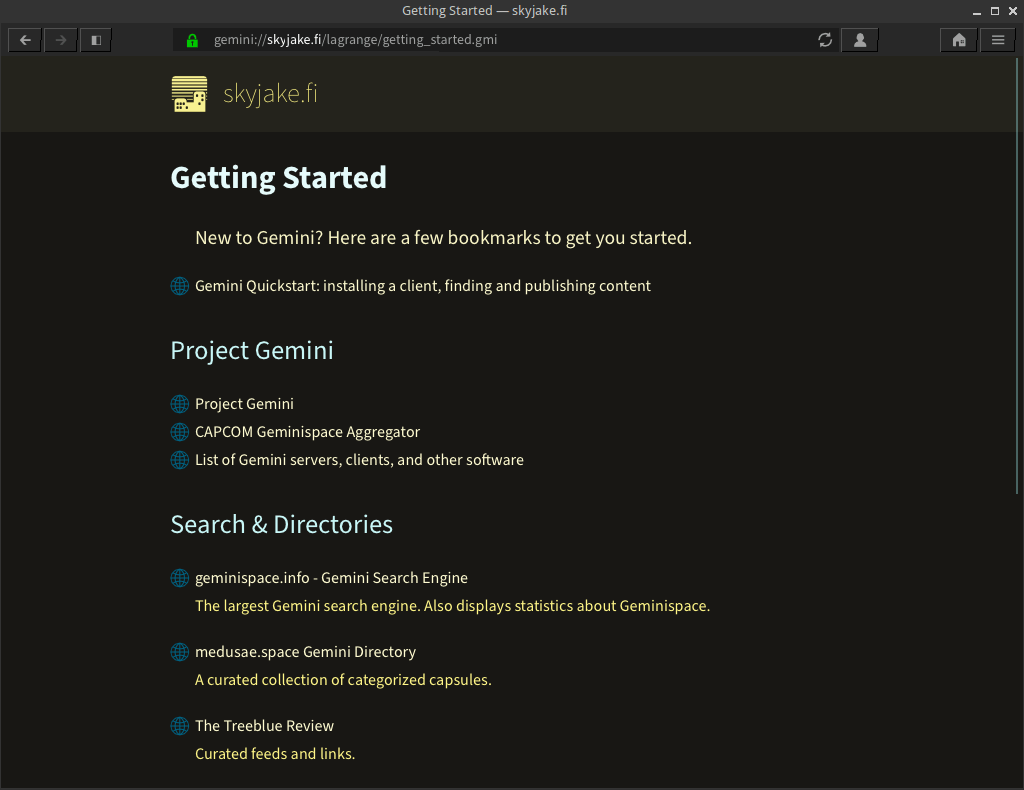

As a result, I started building a very tiny Gemini server and client combination in the form of a command line utility in Go.

Once it’s ready, I will use it to power and run my own Gemini capsule, which I intend to host on a Digital Ocean droplet.

This is really the perfect type of an application for Go, and will allow me to delve into some of the flagship features that set Go apart, like goroutines, networking and so on.
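Part of the appeal is how little there is to the wire format. A request is nothing more than the absolute URL (at most 1024 bytes) followed by CRLF, and the response starts with a single header line made of a two digit status code, a space, a meta string and CRLF, with the body following on success. Here is a minimal sketch of those two pieces, written in Python rather than Go purely for brevity; it leaves out the mandatory TLS connection (port 1965 by default) entirely, and the function names are made up for illustration.

```python
def build_request(url):
    """A Gemini request is the absolute URL followed by CRLF."""
    raw = url.encode("utf-8")
    if len(raw) > 1024:
        raise ValueError("URL exceeds the 1024 byte limit")
    return raw + b"\r\n"

def parse_response_header(line):
    """Split a CRLF-terminated '<status> <meta>' header into (status, meta)."""
    if not line.endswith(b"\r\n"):
        raise ValueError("header must end with CRLF")
    text = line[:-2].decode("utf-8")
    status, _, meta = text.partition(" ")
    if len(status) != 2 or not (status.isascii() and status.isdigit()):
        raise ValueError("status must be two digits")
    return int(status), meta
```

For a status in the 20s the meta string carries the MIME type of the body (typically `text/gemini`); for other status ranges it holds things like a redirect target or an error message.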

You can expect a dedicated post in which I will wholeheartedly dissect my experience and perhaps even shell out some totally unsolicited advice for good measure.

Aren’t there plenty of existing Gemini servers and clients? Oh, there are plenty, in fact one might go as far as saying that there’s a rather healthy and active ecosystem built around it already.

One of my favorite GUI based Gemini clients is Lagrange.

The word client might be a misnomer, browser is probably a way better term to describe it.

A great question that you must be asking yourself right now is who exactly might be the target audience of such a niche internet protocol? And that is an extremely good question, which I’ll try to explain in the way I see it.

From where I stand, there are three distinct groups of peeps using Gemini at this point in time.

First, let’s just call them the old timers, the ones who got to live through the hey-day of BBSes or at least were teenagers or perhaps young adults during those golden days of glory. They always reminisce about those days and have an ardent desire to relive them in one way or another. Needless to say, this group is totally unsatisfied and annoyed by what the so called modern web has become.

The second group, which I proudly include myself in, is the group that missed the BBS days or got only a minuscule taste of them due to timing and/or other reasons and always longed to experience it in its almost raw form.

Last but not least, the third group is composed of children of the modern world, the outcasts, desperately seeking a quiet place away from the neon lights of the metropolis; for them, something like Gemini is like a sanctuary and invokes a feeling that is somewhat weirdly familiar, but totally foreign at the same time.

The great ZIGgurat

Zig is yet another contender among the relatively new and very shiny languages that ought to replace C/C++ in one form or another within our natural lifetimes.

I am not terribly interested or impressed by the language itself. How should I put it best? It’s way too avant-garde for my humble needs and desires when it comes to languages.

However, this is not a critique of the merits or shortcomings of the language itself, but rather the toolchain that powers it under the hood.

Like most languages these days, Zig leverages the LLVM toolchain (which clang is part of) to do most of the heavy lifting when it comes to bootstrapping and so forth.

As a direct result of this, it comes with a fully functional C/C++ compiler hidden under the hood. Well, hidden until Zig’s creator, a good lad known as Andrew Kelley, decided to pull off the hood and expose it in the form of zig cc.

zig cc is a wrapper over the LLVM toolchain under the hood and provides some additional features on top that aren’t available if one would go with the native toolchain.

This is all fine and dandy, however before one could use it as a true drop-in replacement for gcc and/or clang it still needed at least two more things in my book.

First, it needed ar to be exposed as well in order for one to be able to create static libraries, and then a Windows resource file compiler, colloquially known as windres in the world of mingw based cross compilers.

ar was eventually exposed as zig ar, which left windres as the last piece of the puzzle.

Once again as a citizen of the free world, I took it upon myself to add windres. Unfortunately I failed to bootstrap the zig compiler itself, and after a few days I set it aside and put this project on the back burner.

There have been a few zig releases since then and I intend to take another stab at it during the course of next year. But once again, I shall not make any premature promises!

Living life on a Vim

Give a man a Vim plugin and you solve one of his problems. Teach a man how to write Vim plugins and he’ll write one to solve every single one of his problems. I don’t remember exactly how that goes, but it should be something that is pretty close to those lines.

What I always tell people who are considering ramping up and switching to Vim is that, unlike with other editors, the entire thing is a process and it cannot happen overnight, due to every single one of us having quite different workflows as well as needs in order to be what we ultimately end up calling productive.

In many ways it’s as much about the journey as it is about the destination, in terms of identifying some sort of an inefficiency in your very own workflow and way of using Vim and then looking for a way to correct it. Just like with most things that matter in life, there’s always a better way and that happens to be no different when it comes to Vim.

[Bram Moolenaar], the creator and benevolent dictator for life of Vim, happens to have a very good presentation touching upon this very subject of identifying one’s inefficiencies, finding better ways and then turning those into habits.

It’s quite dated at this point, but everything said in there still holds up pretty well to this very day.

You might be thinking that you didn’t sign up for a lecture about Vim when you started reading this post, and here I am preaching to you like a true evangelist, gospels about effective text editing in Vim.

Fear not, that is not my intention, I just want to give an update or two about some changes in my own and very personal Vim world.

I made some improvements to my Vim plugin called fastopen, which allows one to fuzzy search and open files within a directory by leveraging dmenu and Git if the user happens to be editing within the confines of a Git repository. With these changes in place the root of the repository is now properly detected no matter from which sub-directory the user might have started Vim to edit a particular set of files.

I ended up improving the Git repository detection, for which of course I rely on the presence of the excellent Git integration Vim plugin called fugitive by none other than the absolute legend himself, Tim Pope of course. He’s probably one of (if not the) most prolific Vim plugin authors out there.

Now that Vim 8 has been out there for a while, I’d like to make an experiment and see if I could use the built-in popup menu support to display the list of files instead of shelling out to an external tool like dmenu, but that’s an experiment for another day.
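The plugin itself leans on fugitive for this, so I won’t pretend to show its internals, but the underlying idea of finding the repository root from any sub-directory is simple enough: walk up the parent directories until one of them contains a `.git` entry. Here is a minimal Python sketch of that walk, with `find_git_root` being a made-up name for illustration; on the command line, `git rev-parse --show-toplevel` answers the same question.

```python
from pathlib import Path

def find_git_root(start):
    """Walk upward from start; return the first directory containing .git, else None."""
    current = Path(start).resolve()
    for candidate in (current, *current.parents):
        # .git is a directory in a regular clone, but a plain file in
        # worktrees and submodules, hence exists() rather than is_dir().
        if (candidate / ".git").exists():
            return candidate
    return None
```

With the root in hand, the file list for the fuzzy search can always be produced relative to the same directory, no matter where the editor was started.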

Speaking of dmenu, it also happens to be yet another utility on my apparently ever growing list of tools that I’d very much like to replace with my own.

Fantastic Urges and How to Control Them

No, this is not a new novel fresh out of the oven by our beloved J.K. Rowling, nor is it a campaign by PornHub, but rather me resisting the urge of writing my very own X11 window manager among other things, from scratch no less.

In 2020 I decided that enough is enough and wrote my very own terminal emulator from scratch called marmota. I didn’t go completely off the hook and ended up using vte under the hood, but everything around it in terms of features and capabilities has been built keeping my own particular and not so particular needs in mind.

Why would I do that? I grew sick and tired of the constant breakage, ad-hoc feature addition, removals or simply changes in existing behavior of the existing terminal emulators out there, many of whom do use vte under the hood as well.

So, I’ve been using it without a hitch until earlier this year, when after an update, miraculously my fonts were no longer being hinted properly in the terminal.

At first I thought that it must be some distribution level change, that perhaps they re-calibrated the hinting priorities and such, and that it would get addressed in no time.

However, as time went on, new updates were flowing in but my fonts in the terminal were still looking absolutely horrendous. Now I was starting to get a little bit suspicious, and after some digging managed to locate the culprit.

It was indeed a change in vte itself, which has been partially reverted, but never ever restored the previous totally sane and valid behavior.

And now here I am contemplating ditching vte and rolling my own, which is a ridiculous thing to do, I admit as much, and potentially quite a bit of work, but I am at an age where I can no longer take such absolute nonsense.

And what about writing my X11 window manager? Well, considering that I already rolled my own terminal, might as well ditch my current window manager which happens to be Openbox and roll my own.

That would make me only one or two steps away from my own distribution. I guess we’ll have to live and see what the future holds and where the four winds take us.

Oh, with all the commotion I almost forgot about the glorious day when all of a sudden my GNOME Keyring broke due to a change to GLib itself made in the name of security.

With all that said the natural and rather ugly question does rear its ugly head. Can you guess what that question is?

Could 2021 mysteriously have been the year of the Linux Desktop at last?

The answer to that rather most outrageous and ridiculous question according to ancient astrolinux theorists is a resounding and most definite NO.

Let us just leave it at that, shall we? Some things are better to be left unspoken.

GNOME dēlenda est!

P.I.M.P My Desktop

It’s been a while since I made any major changes to the look and feel of my desktop. Is it a sign of my age? Perhaps.

After more than a decade I decided to switch my cursor theme from Comix Cursors to Bibata and I’m more than happy to report that I’ve never been happier.

As far as monospaced fonts suitable for coding are concerned, I celebrated my second year anniversary with the rather excellent JetBrains Mono.

The End

This is the end. I do hope that some of this resonated with you and if it did you can find the paid extended version below by subscribing to my onlyfarmers profile.

Not a big fan of new years’ resolutions myself, which is not terribly surprising considering the fact that I am not really a big tea person myself.

Nevertheless, it seems appropriate to end this rather lengthy retrospective with some sort of a list of it-would-be-nice-to-do for the rather ghastly upcoming year:

- have some showcase-able builds of OLEN Build and OLEN Engine

- continue working on the Legend of Grimrock 2 multiplayer uMod

- live stream some coding sessions at least twice a week on Twitch; during the weekend of course

- upload the VODs of said streams to my YouTube channel in order to start building an audience over there

- reach 1000 followers on Twitter

- write a retrospective post every single month; no excuses or exceptions

- get married and have quadruplets

Until next time as the great and eternal server once said to me: END OF LINE.

P.S: Make sure that you do not have a patchy log server somewhere in your basement.

P.P.S: If you didn’t get that joke, it is time for you to retire and pick up gardening or carpeting.

2021-12-31 / retrospective